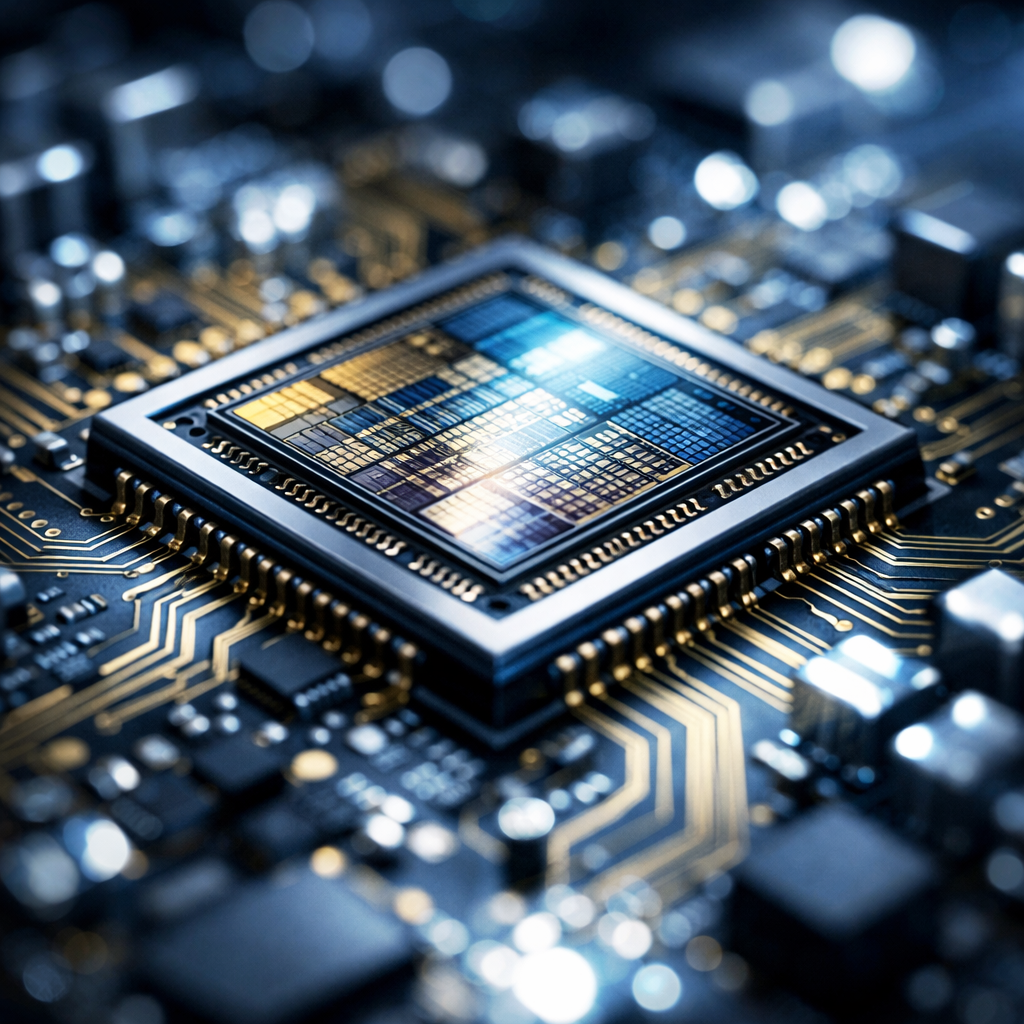

Groq 3 LPU: Nvidia Unveils the First Chip From Its $20 Billion Groq Deal, and It Isn't a GPU

March 23, 2026

08:43

At GTC 2026, Jensen Huang didn't just announce another GPU. He unveiled the Groq 3 LPU, a fundamentally different kind of AI chip built on technology from Nvidia's $20 billion acquisition of the startup Groq. The Language Processing Unit isn't designed to train models faster; it's designed to run them faster. That distinction could redefine who controls the AI economy.

Nvidia debuted the Groq 3 Language Processing Unit at GTC, its annual conference, on March 16, 2026. The chip is the first product built on intellectual property Nvidia acquired when it purchased AI chip startup Groq for $20 billion on Christmas Eve 2025, the largest acquisition in the company's history, according to Tom's Hardware and IEEE Spectrum.

The Groq 3 LPU is not a GPU. GPUs excel at the massively parallel math required to train AI models; the LPU is purpose-built for inference, the process of running trained models to generate responses, images, code, and decisions. Instead of the high-bandwidth memory (HBM) that GPUs use, it relies on SRAM integrated directly onto the processor, reaching 150 terabytes per second of memory bandwidth, roughly seven times the 22 TB/s of Nvidia's own Rubin GPU.
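
Why bandwidth matters for inference can be shown with a back-of-envelope calculation. When a model generates text one token at a time, its weights are typically read from memory for every token, so memory bandwidth sets a ceiling on single-stream decode speed. The sketch below checks the quoted ratio and computes that ceiling. The 70-billion-parameter model and 8-bit weights (1 byte per parameter) are hypothetical assumptions, not Nvidia figures, and the bound deliberately ignores compute limits, KV-cache traffic, batching, and the fact that a model this size would be spread across many chips.

```python
# Back-of-envelope check of the reported bandwidth figures, plus a
# memory-bound ceiling on single-stream decode speed.
# ASSUMPTIONS (hypothetical, not from Nvidia): 70B-parameter model,
# 8-bit weights (1 byte/parameter), all weights streamed once per token;
# compute, KV-cache traffic and batching are ignored.

LPU_BANDWIDTH_TBPS = 150.0   # Groq 3 LPU on-chip SRAM bandwidth, as reported
RUBIN_BANDWIDTH_TBPS = 22.0  # Rubin GPU bandwidth, as reported


def bandwidth_ratio(a_tbps: float, b_tbps: float) -> float:
    """How many times more bandwidth `a` has than `b`."""
    return a_tbps / b_tbps


def max_tokens_per_second(bandwidth_tbps: float, params_billion: float,
                          bytes_per_param: float) -> float:
    """Upper bound on tokens/s if every token requires reading all weights once."""
    bytes_per_token = params_billion * 1e9 * bytes_per_param
    return bandwidth_tbps * 1e12 / bytes_per_token


if __name__ == "__main__":
    ratio = bandwidth_ratio(LPU_BANDWIDTH_TBPS, RUBIN_BANDWIDTH_TBPS)
    print(f"LPU/Rubin bandwidth ratio: {ratio:.1f}x")  # 6.8x, i.e. roughly seven times
    for name, bw in [("Groq 3 LPU", LPU_BANDWIDTH_TBPS), ("Rubin GPU", RUBIN_BANDWIDTH_TBPS)]:
        tps = max_tokens_per_second(bw, params_billion=70, bytes_per_param=1)
        print(f"{name}: at most ~{tps:,.0f} tokens/s for the hypothetical model")
```

The point of the sketch is the proportionality, not the absolute numbers: under a memory-bound model, the decode ceiling scales linearly with bandwidth, which is why the LPU's SRAM design targets inference rather than training.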

Nvidia also launched the Groq 3 LPX platform, a server rack containing 128 LPUs. When it is paired with Nvidia's Vera Rubin NVL72 GPU rack, the company claims 35x higher throughput per megawatt and a target of 1,500 tokens per second for agentic AI communications, according to SiliconANGLE.
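
To put the 1,500 tokens-per-second target in perspective, the sketch below converts a throughput figure into average time per token and the time needed to emit a response of a given length. It assumes the target applies to a single output stream, which the claim as reported does not specify; the 500-token response length is an arbitrary example.

```python
# Convert a decode throughput target into per-token and per-response latency.
# ASSUMPTION: the 1,500 tokens/s target applies to one output stream;
# the 500-token response length is an arbitrary example.

TARGET_TOKENS_PER_SECOND = 1_500  # Nvidia's stated target for the LPX + NVL72 pairing


def ms_per_token(tokens_per_second: float) -> float:
    """Average time between consecutive tokens, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for_response(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate `num_tokens` at a steady rate (ignores time to first token)."""
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    print(f"{ms_per_token(TARGET_TOKENS_PER_SECOND):.2f} ms per token")  # 0.67 ms
    print(f"{seconds_for_response(500, TARGET_TOKENS_PER_SECOND):.2f} s for a 500-token reply")  # 0.33 s
```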

The "35x throughput per megawatt" figure may matter more than raw speed. Power availability is already constraining data center expansion worldwide, so a system that delivers 35 times more AI output per unit of electricity could make deployments feasible that power limits previously ruled out. In theory, an AI workload that needs 35 megawatts on GPUs alone could run on 1 megawatt with an LPU-GPU combination.
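
The megawatt example follows directly from the efficiency ratio: for a fixed amount of work, required power scales inversely with throughput per megawatt, and for a fixed power budget, output scales with it. The sketch below assumes the 35x gain applies uniformly to the whole workload and is measured against a GPU-only deployment, which is how the paragraph reads; Nvidia's claim, as reported, may define the baseline differently. The function names are illustrative.

```python
# Power needed for a fixed workload when throughput per megawatt improves.
# ASSUMPTION: the 35x gain applies uniformly to the whole workload and is
# relative to a GPU-only deployment.


def power_needed_mw(baseline_power_mw: float, efficiency_gain: float) -> float:
    """Power for the same output after throughput/MW rises by `efficiency_gain` times."""
    if efficiency_gain <= 0:
        raise ValueError("efficiency_gain must be positive")
    return baseline_power_mw / efficiency_gain


def throughput_multiple(power_budget_mw: float, baseline_power_mw: float,
                        efficiency_gain: float) -> float:
    """How many copies of the baseline workload fit in a fixed power budget."""
    return power_budget_mw / power_needed_mw(baseline_power_mw, efficiency_gain)


if __name__ == "__main__":
    print(power_needed_mw(35, 35))          # 1.0 (MW), matching the paragraph's example
    print(throughput_multiple(35, 35, 35))  # 35.0: the same 35 MW serves 35x the work
```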

If the Groq 3 delivers on these claims, inference costs will drop dramatically. That creates a flywheel: cheaper inference means more AI-powered products, which means more inference demand, which means more LPU sales. Nvidia is aiming for the same lock-in that CUDA created for GPU computing, but in a market projected to be an order of magnitude larger than training.

The Groq 3 ships in late 2026. AMD is expected to respond at Computex in June, and Intel's Gaudi 4 is in development. But Nvidia has the software-ecosystem advantage: the Groq 3 integrates with NIM, Nvidia's inference software stack, which is designed to make the LPU the default choice. The AI chip war has just opened a second front.

What is the Nvidia Groq 3 LPU? A new AI chip purpose-built for inference. It uses on-chip SRAM to reach 150 TB/s of memory bandwidth, roughly 7x that of Nvidia's Rubin GPU, and was unveiled at GTC 2026.

How is an LPU different from a GPU? GPUs excel at training AI models. LPUs are optimized for running trained models at scale: generating text and images and powering AI agents in real time with ultra-low latency.

Why did Nvidia pay $20 billion for Groq? To dominate AI inference, a market projected to be 10x larger than training by 2028. Nvidia claims the Groq 3 LPX, paired with its Vera Rubin NVL72 rack, delivers 35x more throughput per megawatt, giving the company a complete training-to-inference lineup.
